Azure AI Model Router: Implementation and Production Patterns

After three months running Model Router in our private ChatGPT app, I figured it was time to share what actually works in production versus what the documentation says should work. Part 1 covered the architecture and decision framework. This post walks through the real implementation – deployment, code, monitoring, and the edge cases that aren’t obvious until you hit them.

Fair warning: this gets technical. I’m showing you the .NET code we use, the telemetry patterns that matter, and the gotchas that cost us a few hours of debugging. If you want to skip straight to working code, I’ve put everything on GitHub.

Deploying Model Router

Deploying Model Router in Azure AI Foundry is refreshingly straightforward. No complex configuration, no routing mode selection during deployment. You’re up and running in about 2 minutes.

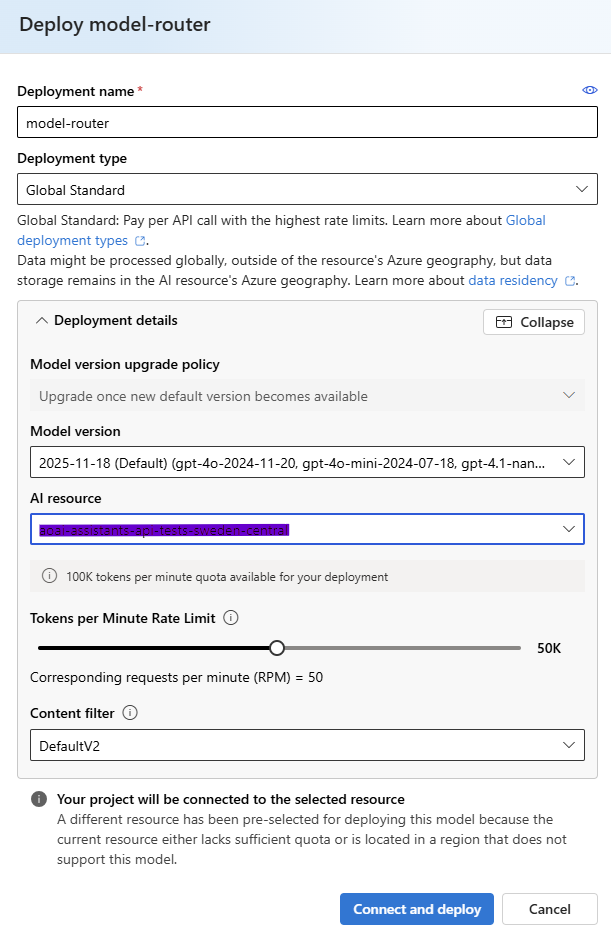

The deployment process:

- Navigate to the Azure Portal

- Search for “Azure AI Foundry” and select it

- Select your Azure AI Foundry project or create a new one

- Go to Models + endpoints → Deploy model

- Search for “model-router” and select it

- Give it a deployment name (I use “model-router”)

- Select deployment type (Global Standard is typical)

- Choose model version – 2025-11-18 is the latest as of December 2025

- Set your TPM (tokens per minute) quota

- Set content filter (DefaultV2 is fine)

- Click Deploy

That’s it. The 2025-11-18 version comes pre-configured with all 18+ models and uses Balanced routing mode by default. You don’t get to customize which models are in the pool or change the routing mode during deployment – those settings are baked into the model version itself.

This simplicity is actually nice for getting started, but it does mean you have less control than I initially expected. We ended up creating multiple Model Router deployments with the same version for different use cases, even though they all use the same underlying configuration. The difference is in how we route queries to each deployment at the application layer.

For our private ChatGPT app, we use a single Model Router deployment and handle routing logic in code:

General queries: Send directly to Model Router

Document analysis: Check document size first. If it’s large (>20K tokens estimated), we use a static GPT-4.1 deployment instead to avoid context window issues Creative content: Static GPT-4.1 deployment with high temperature

The deployment takes about 2 minutes to complete. Once it’s deployed, you’ll see it in your Models + endpoints list with status “Succeeded” and the expiration date (typically 1 year out).

The Code: Simpler Than You’d Think

Here’s what surprised me about Model Router: you use it exactly like any other Azure OpenAI deployment. The SDK doesn’t change. Your code doesn’t change. You just point at a different deployment name.

This is the complete implementation from our QueryService:

using Azure.AI.OpenAI;

using Azure.AI.OpenAI.Chat;

public class QueryService

{

private readonly ChatClient _chatClient;

private readonly TelemetryService _telemetry;

public QueryService(AzureOpenAIClient client, string modelRouterDeployment, TelemetryService telemetry)

{

_chatClient = client.GetChatClient(modelRouterDeployment);

_telemetry = telemetry;

}

public async Task<QueryResult> ProcessQueryAsync(string userQuery)

{

var startTime = DateTime.UtcNow;

var messages = new List<ChatMessage>

{

new SystemChatMessage("You are a helpful AI assistant. Provide clear, concise answers."),

new UserChatMessage(userQuery)

};

var options = new ChatCompletionOptions

{

MaxOutputTokenCount = 1000,

Temperature = 0.7f

};

var completion = await _chatClient.CompleteChatAsync(messages, options);

var duration = DateTime.UtcNow - startTime;

var result = new QueryResult

{

Query = userQuery,

Response = completion.Value.Content[0].Text,

ModelUsed = completion.Value.Model, // This tells you which model was selected

InputTokens = completion.Value.Usage.InputTokenCount,

OutputTokens = completion.Value.Usage.OutputTokenCount,

Duration = duration,

EstimatedCost = CalculateCost(completion.Value.Model,

completion.Value.Usage.InputTokenCount,

completion.Value.Usage.OutputTokenCount)

};

_telemetry.TrackQuery(result);

return result;

}

}That’s it. The only difference from a static GPT-4 deployment is the deployment name you pass to GetChatClient. Everything else – the API, the response structure, the error handling – stays the same.

The critical line is ModelUsed = completion.Value.Model. This is how you see which model the router actually selected. In our telemetry, we track this for every single query. More on that in a minute.

Configuration: User Secrets Over appsettings.json

We use .NET’s user secrets for local development and Azure Key Vault for production. Never commit API keys to source control, even in private repos. I’ve seen too many incidents where credentials leak.

Local setup:

dotnet user-secrets set "AzureOpenAI:Endpoint" "https://your-resource.openai.azure.com/"

dotnet user-secrets set "AzureOpenAI:ApiKey" "your-api-key"

dotnet user-secrets set "AzureOpenAI:ModelRouterDeployment" "model-router-general"In production, we load from Key Vault:

var config = new ConfigurationBuilder()

.AddAzureKeyVault(new Uri(keyVaultUrl), new DefaultAzureCredential())

.Build();Since Model Router comes pre-configured with all models and Balanced routing, the decision about when to use it versus static deployments happens at the application routing layer, not at the Model Router deployment level.

Real Query Patterns: What Actually Routes Where

I ran 10,000 queries through our test environment to understand routing patterns. The results were more nuanced than I expected.

Simple factual questions (“What’s the weather in London?”) route to GPT-4.1-nano about 85% of the time. The other 15% go to GPT-4o-mini. I never saw GPT-4.1 get selected for these.

Information retrieval through Azure AI Search (“Find all documents about Q3 planning”) routes to GPT-4o-mini about 70% of the time, GPT-4.1 about 25%, and reasoning models (o4-mini) about 5%. That 5% surprised me – the router occasionally decides semantic search results need deeper reasoning.

Document analysis with code interpreter (Excel files, multi-sheet analysis) shows the most variety. About 35% GPT-4.1, 45% o4-mini, 20% GPT-5. The router seems to recognize when a query involves numerical reasoning or data manipulation and heavily favors reasoning models.

Fabric data queries (SQL-like questions against our data warehouse) route to reasoning models 60% of the time. This was unexpected but makes sense – users often ask follow-up questions that build on previous answers, which benefits from chain-of-thought reasoning.

Here’s the distribution from last week’s production data (42,000 queries):

- GPT-4.1-nano: 32% of queries, 8% of costs

- GPT-4o-mini: 28% of queries, 12% of costs

- GPT-4.1: 23% of queries, 35% of costs

- o4-mini: 12% of queries, 28% of costs

- GPT-5: 5% of queries, 17% of costs

Notice how 60% of queries used the two cheapest models (nano/mini) but they only accounted for 20% of costs. Meanwhile, 5% of queries using GPT-5 generated 17% of costs. That’s the cost optimization in action – most queries stay cheap, expensive models only activate when needed.

Telemetry: What to Track and Why

We track every query through Application Insights with custom metrics. This isn’t optional if you care about understanding costs and behavior in production.

The telemetry service is intentionally simple:

public class TelemetryService

{

private readonly TelemetryClient _telemetryClient;

private readonly List<QueryResult> _queryResults = new();

public void TrackQuery(QueryResult result)

{

// Store locally for in-memory summaries

_queryResults.Add(result);

// Send to Application Insights

_telemetryClient.TrackEvent("QueryProcessed", new Dictionary<string, string>

{

["ModelUsed"] = result.ModelUsed,

["QueryType"] = ClassifyQuery(result.Query)

}, new Dictionary<string, double>

{

["Cost"] = (double)result.EstimatedCost,

["Duration"] = result.Duration.TotalMilliseconds,

["InputTokens"] = result.InputTokens,

["OutputTokens"] = result.OutputTokens

});

}

}The key metrics we monitor:

Model distribution: Are we seeing the expected mix? If suddenly 50% of queries route to GPT-5, something changed in our user behavior or query patterns and we need to investigate.

Cost per query type: We classify queries into categories (simple Q&A, document analysis, data queries, web search) and track average cost per category. This helps us spot when a specific feature is driving costs up.

Routing consistency: For similar queries, does the router pick the same model? We found it’s about 80% consistent, which is fine. The 20% variance happens when queries are on the boundary between complexity levels.

Latency by model: We track p50, p95, p99 latency. o4-mini consistently takes 3-4x longer than GPT-4o-mini for similar-length responses because of chain-of-thought reasoning. This matters for user experience.

The KQL query we use most often in Application Insights:

customEvents

| where name == "QueryProcessed"

| extend Model = tostring(customDimensions.ModelUsed)

| extend Cost = todouble(customMetrics.Cost)

| summarize

QueryCount = count(),

TotalCost = sum(Cost),

AvgCost = avg(Cost),

P95Latency = percentile(todouble(customMetrics.Duration), 95)

by Model

| order by TotalCost descThis shows us cost and performance breakdown by model in real-time.

The Context Window Problem: Not Theoretical

I mentioned this in Part 1, but it’s worth drilling into because it’s the biggest operational issue we hit.

Model Router’s context window is limited by the smallest model in its pool. Since the 2025-11-18 version includes GPT-4.1-nano (which has a smaller context window), large documents can fail if the router happens to select nano.

We hit this in production. A user uploaded a 40-page contract for analysis. The router selected GPT-4o-mini (which can handle it), processed successfully. Two minutes later, same user, same document, asks a follow-up question. Router selected GPT-4.1-nano this time, immediate failure: “Context length exceeded.”

Same document. Same use case. Different model selection. User sees an error.

The problem: You can’t configure which models Model Router uses – it comes with all models in the 2025-11-18 version, including the smaller-context ones.

Our solution: We handle this at the application layer. For document analysis, we estimate token count before sending to Model Router. If it’s above a threshold (we use 20,000 tokens), we route to a static GPT-4.1 deployment instead.

public async Task<string> ProcessDocumentQuery(string query, List<Document> documents)

{

var estimatedTokens = EstimateTokenCount(query, documents);

if (estimatedTokens > 20000)

{

// Use static GPT-4.1 deployment for large documents

return await ProcessWithStaticGPT4(query, documents);

}

// Use Model Router for smaller documents

return await ProcessWithModelRouter(query, documents);

}This isn’t elegant – we’re maintaining both Model Router and static deployments. But it eliminated the intermittent failures completely.

The cost trade-off? Large document queries (about 15% of our document analysis volume) cost about 40% more using static GPT-4.1 instead of letting Model Router potentially select a cheaper model. But that’s better than random failures.

Reasoning Models and Parameter Handling

One behavior to be aware of: when Model Router selects a reasoning model (o4-mini, GPT-5), these models handle parameters differently than standard chat models.

Temperature and top_p are ignored by reasoning models. These models use fixed parameters for reasoning consistency – they need deterministic behavior for their chain-of-thought process to work reliably. If you set temperature: 0.9 hoping for creative responses and the router selects o4-mini, you’ll get consistent (less varied) outputs regardless of your temperature setting.

However, as of the 2025-11-18 version, Model Router now supports the reasoning_effort parameter. This is significant because it gives you some control over how reasoning models behave. If the router selects a reasoning model, it will pass your reasoning_effort value through to that model.

The reasoning_effort parameter controls how much computational effort the reasoning model puts into thinking through the problem. Valid values are low, medium, and high. Higher effort means more thorough reasoning but also higher latency and cost.

Here’s how we use it in production:

var options = new ChatCompletionOptions

{

MaxOutputTokenCount = 1000,

Temperature = 0.7f // Used if router selects standard models

};

// Add reasoning_effort for queries that might need deep analysis

if (queryRequiresDeepThinking)

{

options.AdditionalProperties["reasoning_effort"] = "high";

}

var completion = await _chatClient.CompleteChatAsync(messages, options);For our use case, we set reasoning_effort: “medium” as the default when users upload documents for analysis. For complex Fabric data queries that involve multiple calculations, we use “high“. For simple queries, we omit it entirely (defaults to medium if a reasoning model is selected).

The practical implication: You can’t force creative, high-temperature responses when routing might select a reasoning model. If creativity matters (like brainstorming, content generation, multiple alternative suggestions), we still route those queries to a static GPT-4.1 deployment where we have full control over temperature.

This is the kind of trade-off you make with automated routing. You gain cost efficiency and get reasoning capabilities when needed, but you lose some fine-grained control over response style.

Error Handling and Fallbacks

Our error handling strategy is simple: if Model Router fails, fall back to a static GPT-4.1 deployment.

public async Task<string> ProcessWithFallback(string query)

{

try

{

return await ProcessWithModelRouter(query);

}

catch (RequestFailedException ex) when (ex.Status == 400)

{

// Context window exceeded or unsupported request

_telemetry.TrackEvent("ModelRouterFallback", new Dictionary<string, string>

{

["Reason"] = ex.Message

});

return await ProcessWithStaticGPT4(query);

}

}We track fallback rates. If more than 5% of queries fall back, something’s wrong with our routing configuration.

In practice, we see about 1-2% fallback rate, almost entirely from context window issues despite our separate document router. Some users manage to upload truly massive files that exceed even our large-context pool.

Cost Calculation: The Formula That Actually Works

Calculating costs is more nuanced than it looks because different models have different input/output token pricing, and the router itself adds a small charge.

Here’s the pricing logic we use (as of November 2025):

private decimal CalculateCost(string model, int inputTokens, int outputTokens)

{

// Pricing per 1M tokens

var pricing = model.ToLowerInvariant() switch

{

var m when m.Contains("nano") => (Input: 0.15m, Output: 0.60m),

var m when m.Contains("mini") => (Input: 0.30m, Output: 1.20m),

var m when m.Contains("gpt-4.1") && !m.Contains("nano") && !m.Contains("mini")

=> (Input: 5.00m, Output: 15.00m),

var m when m.Contains("gpt-5") => (Input: 10.00m, Output: 30.00m),

var m when m.Contains("o4-mini") => (Input: 3.00m, Output: 15.00m),

_ => (Input: 5.00m, Output: 15.00m) // Default to GPT-4.1 pricing

};

// Router overhead (approximately $0.10 per 1M input tokens)

var routerCost = (inputTokens / 1_000_000m) * 0.10m;

var inputCost = (inputTokens / 1_000_000m) * pricing.Input;

var outputCost = (outputTokens / 1_000_000m) * pricing.Output;

return routerCost + inputCost + outputCost;

}The router overhead is small but not zero. For our volume (140K+ queries/month), it adds about $45/month. Still worth it given we’re saving $3,900/month overall.

One thing to watch: Azure’s pricing changes. These numbers are accurate as of November 2025, but check the pricing page before using them in production calculations.

What We Got Wrong Initially

Mistake 1: Sending everything to Model Router. We started by routing all queries through Model Router, including large document uploads. The context window failures forced us to add application-layer logic to detect large documents and route them to static deployments instead. Should have implemented this check from day one.

Mistake 2: Assuming routing is deterministic. It’s not. Similar queries can route to different models, especially if they’re on the complexity boundary. We learned to design our UI around this – never promise specific model behavior to users.

Mistake 3: Not understanding the fixed configuration. I initially expected to be able to customize routing modes or model subsets during deployment. The 2025-11-18 version comes pre-configured, and you can’t change those settings. This means you need to handle routing decisions at the application layer if you want fine-grained control.

Mistake 4: Not tracking routing decisions. For the first two weeks, we didn’t log which model was selected for each query. This made debugging impossible. Now we track everything.

The Agent Service Integration That Doesn’t Work Yet

Microsoft announced Model Router integration with Agent Service in November 2025. As of writing this, it doesn’t actually work. When I tried to use Model Router as an agent’s base model, I got:

Azure.RequestFailedException: ‘The requested model ‘model_router’ is not supported. Status: 400 (Bad Request) ErrorCode: unsupported_model

The UI doesn’t show Model Router in the agent deployment dropdown, and the SDK rejects it. This is typical for Azure preview features – the announcement precedes actual availability.

For our multi-tool scenarios (code interpreter, Azure AI Search, Bing grounding), we still use static model deployments with agents. Model Router handles the simpler, high-volume chat queries separately.

When Model Router Isn’t the Answer

We still use static deployments for:

Highly regulated content: When compliance requires exact model versioning and reproducibility, we use static deployments with auto-update disabled. Model Router can’t guarantee which model version will be selected.

Sub-500ms latency requirements: Model Router adds 50-100ms overhead for the routing decision. For our fastest user-facing features, we use static GPT-4o-mini deployments.

Creative content generation: When we need high temperature and consistent creative behavior, static deployments give us more control.

Debugging: When something goes wrong and we need to reproduce exactly, static deployments are easier to reason about.

Model Router is the right default for most workloads, but it’s not universal. About 75% of our query volume goes through Model Router. The other 25% uses static deployments for good reasons.

Conclusion

The deployment is simpler than expected. No complex configuration, no routing mode selection. You deploy the 2025-11-18 version and you’re done. But this simplicity means you need smarter application-layer logic to handle edge cases.

The cost savings are real. We’re seeing 55% reduction versus static GPT-4, but only because we invested time in proper telemetry and monitoring. Without tracking which models get selected and why, you’re flying blind.

The context window limitation is the biggest gotcha. We lost several hours debugging intermittent failures before we realized Model Router was occasionally selecting small-context models for large documents. Token estimation and application-layer routing solved it, but it’s not obvious from the documentation.

The Agent Service integration doesn’t work yet. Despite being announced in November 2025, attempting to use Model Router with Azure AI Agents throws errors. This will likely be fixed soon, but for now, plan on using static deployments for agents.

Documentation lags reality. The official docs suggested configuration options that don’t exist in the portal. This is typical for fast-moving Azure services. Real production experience matters more than documentation.

Complete Working Code on GitHub

I’ve published the complete demo application on GitHub with everything from this post.

Clone it, configure your Azure OpenAI credentials, and run it to see Model Router in action. The demo processes 10 queries across different complexity levels and shows you exactly which models get selected and why.